…and the challenges that we face when bringing in change and enforcing it

For this post we are going to have a look at how the Sports UI team within bet365 handle the rapid pace of change in technology, what happens when it doesn’t go well and how we have reacted to this to allow success in the future.

Being a company focused on technology, we are all too aware that the technology landscape is constantly moving. Today the flavour of the month could be Angular 2; next month it could flip entirely to ReactJS, and the month after that it could move again to VueJS.

We have previously discussed our reasons for not using public frameworks, adopting TypeScript and managing our front-end codebase end-to-end in-house. As part of adopting these patterns we had to overcome many challenges, and most of them were in fact not due to technology, but people.

Some History

Historically, the bet365 Mobile site has been exclusively on the Microsoft stack, primarily using VB.NET. We had a new challenge where we had to bring developers, most of whom had never heard of TypeScript, into a new way of thinking: client-side rendering.

Our move in technology from VB.NET to TypeScript was never going to be easy. Our developers had spent years working with a system where mark-up was delivered from the server and then updated on the client using AJAX, jQuery and JavaScript (resulting in some very big files). We were now going to do it all on the client, use a new technology and enforce object-orientated, class-based patterns.

When switching between technologies the problems that we face are rarely language based – Training on syntax can be achieved easily – The obstacles are more closely tied to coding patterns, differences in performance and most importantly, mind-sets.

The Process

For the first 12 months of having TypeScript on the Mobile site, used exclusively for our In-Play product and stand-alone pods on our homepage, things seemed to be going well. Our .NET developers were picking up TypeScript well and more and more of the Mobile site was switching platform and becoming standalone TypeScript modules – Or so we thought.

When we converted our Flash site to TypeScript we introduced a number of linting (static code analysis) rules to try and alleviate some of the problems and provide automated guidance, which was completed with great success. We took the view that we would try and replicate this success by having the same set of rules and guidance for our newly developing Mobile TypeScript code base.

We were surprised by the results. Upon trying to enable our new TypeScript and CSS rules we were presented with tens of thousands of errors. The TypeScript modules were interlinked back with the .NET website, CSS selectors had bled between the two products and the structure of the TypeScript did not meet the vision that was originally portrayed. Classes had become extremely bloated and logic was split between the JavaScript and TypeScript.

In the end we had to compromise – A subset of the rules would be enabled, but any new code that we wrote would use the full rule-set and we would treat the existing code as ‘legacy’ (a word that gets over-used by most developers and one we have learnt to use sparingly!).
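The compromise above can be pictured with a minimal sketch. The rule names, file paths and the idea of an explicit legacy list are all hypothetical, used only to illustrate the split between existing and new code; they are not a description of our actual tooling.

```typescript
// Hypothetical rule identifiers; the real rule names are not given in this post.
const FULL_RULE_SET: readonly string[] = [
  "no-implicit-any",
  "max-class-length",
  "no-dotnet-globals",
];

// The reduced subset that existing ("legacy") files are held to.
const LEGACY_RULE_SET: readonly string[] = ["no-implicit-any"];

// Legacy files get the subset; any file not on the legacy list
// (that is, new code) is checked against the full rule-set.
function rulesFor(filePath: string, legacyFiles: readonly string[]): readonly string[] {
  return legacyFiles.indexOf(filePath) !== -1 ? LEGACY_RULE_SET : FULL_RULE_SET;
}

// Hypothetical file names, for illustration only.
const legacyFiles = ["src/inplay/Scoreboard.ts"];
const rulesForLegacyFile = rulesFor("src/inplay/Scoreboard.ts", legacyFiles);
const rulesForNewFile = rulesFor("src/racing/RaceCard.ts", legacyFiles);
```

The useful property of a list like this is that it can only shrink: once a legacy file is brought up to standard it is removed from the list and is held to the full rule-set from then on.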

We began focusing more on education and ensuring that we were empowering our team for success.

Challenges

Obstacles

Eighteen months passed and we were in August of 2017. Our HTML5 site had been fully integrated for 9 months and, for the first time in 6 years, the Sports UI team was cross-skilled to develop on both the Desktop and Mobile sites now that Adobe’s Flash was no longer used. Significant progress had been made on the Mobile site with the move to TypeScript, with nearly half a million lines of code now in the language. But we still had a primarily .NET Mobile site, and the amount of code being added to the .NET code base was ever growing.

We found that for every two TypeScript releases that we were doing, we were doing one release of the .NET codebase. The migration was taking place gradually, but the rate of change in the .NET codebase that we were switching away from was alarming.

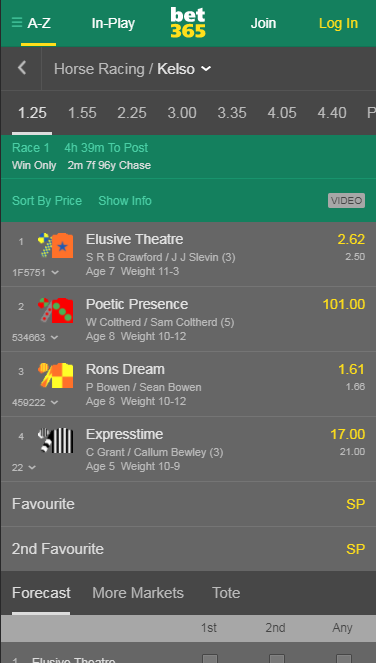

It was shortly after this that the Sports UI development team at bet365 was presented with a challenge: we had to re-design the Racing section of our Mobile site before the start of the Australian racing season, only a few months away. In the 2 ½ years since introducing TypeScript onto our Mobile site, we had not managed to move any full section of our pre-match site into just TypeScript.

After performing our analysis we agreed that we would be able to do it within the required dates. Our migration had gone well so far and we could build upon what we already had in order to speed the process up – Or so we thought.

You may have noticed a pattern evolving here. Where our move between technologies actually stood and where our technical leadership team thought it stood were vastly different, and we were about to find out by quite how much.

Frustrations

The direction that had been laid down at the start of the project was clear – This project was to be done in just TypeScript and should have no ties to the .NET codebase. This is not what had been pushed into our source control so far.

Immediately after seeing this, we began updating our documentation and temporarily instigated weekly meetings where we would reinforce the vision, as well as outlining how we get there and how we can write code that doesn’t have to be interlinked. We set down rules to ensure that our communication was one-way, allowing our .NET code to call into our TypeScript code, but not the other way around.
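A one-way rule like this lends itself to an automated check. The following is a minimal sketch, assuming we already have a list of "who references whom" between files. How that list is gathered, the file names and the extension-based convention are all hypothetical.

```typescript
type Side = "dotnet" | "typescript";

interface Reference {
  from: string; // file making the call
  to: string;   // file being called
}

// Assumed convention for this sketch: anything ending in .ts is TypeScript,
// everything else is treated as part of the .NET site.
function sideOf(filePath: string): Side {
  return /\.ts$/.test(filePath) ? "typescript" : "dotnet";
}

// A reference breaks the one-way rule when TypeScript reaches back into .NET.
function findViolations(references: Reference[]): Reference[] {
  return references.filter(
    (ref) => sideOf(ref.from) === "typescript" && sideOf(ref.to) === "dotnet"
  );
}

const references: Reference[] = [
  { from: "Racing.aspx.vb", to: "racing/RaceCard.ts" },   // allowed: .NET -> TypeScript
  { from: "racing/RaceCard.ts", to: "racing/Runner.ts" }, // allowed: TypeScript -> TypeScript
  { from: "racing/RaceCard.ts", to: "Helpers.vb" },       // violation: TypeScript -> .NET
];

const violations = findViolations(references);
```

In this example only the last reference is reported, because it is the only one where TypeScript code depends on the .NET side.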

Whilst frustrating, the process forced us to reconsider how we inform our team of change and ultimately how we go about enforcing it. We looked back over the tooling that we had in place and decided that whilst we would continue to do training sessions, we would enhance our existing toolset to act as an early warning system.

The concept on paper sounds simple. In order to write code in a cohesive manner that any developer can pick up and work on, we needed to have a number of resources available to us:

- Clearly defined coding standards

- Core coding principles

- A clear vision from the technical leadership team of where we are heading

- An early warning system for problem code, ideally before hitting build

Within the UI team we have rigorous standards that we expect our developers to abide by. We wrote up our coding principles and distributed them to the team, and started weekly sessions with our developers to relay the vision for how we expected the structure to look, as well as how we would make our codebase extensible, maintainable and performant.

Mind-sets

As we continued to evolve our products at a rapid pace, and the total front-end code for our Mobile and Desktop websites neared the 2,000,000 line mark, we found that as the team continued to grow, our ability to enforce a high level of quality and consistency started to become stretched. This is a platform where we train exclusively in-house and our developers come from multiple disciplines.

We had to change the mind-sets of our team to ensure that we were always thinking about the right thing to do, rather than the quickest thing to do.

We looked back at static code analysis to assist us, but decided to trial taking it a step further. We were going to allow our code to fix itself when developers save their code for build. If the formatting was wrong, a semi-colon was missing, or the wrong indentation was being used, rather than just tell the developer about the problem we would automatically correct it.

We named these ‘auto-fixers’ and looked at some of the common problems that we had with developers coming from a .NET background and moving into TypeScript. We started using these more and more, validating folder structures, code exports, variable aliasing and much more. We invested heavily in writing many custom fixers specifically for what we needed.

These alone were never going to solve our problems, but they opened a door for us. With a code base as large as ours, adding a new rule to abide by would often take man-weeks to implement, as we had to go back and fix everything. A combination of file-by-file analysis and auto-fixers removed this barrier to entry for us.
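To give a flavour of the idea, here is a minimal sketch of an auto-fixer pipeline. The `AutoFixer` interface, the two example fixers and the four-space indentation are assumptions made for this illustration and are not our real fixers. Production fixers would normally work on the parsed syntax tree rather than on raw text.

```typescript
// Hypothetical shape of an auto-fixer: it takes source text and returns a corrected copy.
interface AutoFixer {
  name: string;
  fix(source: string): string;
}

// Replaces each leading tab with four spaces (an assumed house style).
const tabsToSpaces: AutoFixer = {
  name: "indentation",
  fix: (source) =>
    source
      .split("\n")
      .map((line) => line.replace(/^\t+/, (tabs) => tabs.replace(/\t/g, "    ")))
      .join("\n"),
};

// Strips trailing spaces and tabs from every line.
const trimTrailingWhitespace: AutoFixer = {
  name: "trailing-whitespace",
  fix: (source) =>
    source
      .split("\n")
      .map((line) => line.replace(/[ \t]+$/, ""))
      .join("\n"),
};

// Runs every fixer in order and records which ones actually changed the text.
function applyFixers(
  source: string,
  fixers: AutoFixer[]
): { output: string; applied: string[] } {
  const applied: string[] = [];
  let output = source;
  for (const fixer of fixers) {
    const next = fixer.fix(output);
    if (next !== output) {
      applied.push(fixer.name);
    }
    output = next;
  }
  return { output, applied };
}

const result = applyFixers("\tconst total = 1;  \n", [tabsToSpaces, trimTrailingWhitespace]);
```

Recording which fixers changed the file means the developer can still be told what was corrected on their behalf, rather than the change happening silently.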

Having a constant reminder in your console that you’re performing an incorrect action quickly moves the mind-set from one way of thinking to another, especially when combined with linking to documentation and descriptive reasons as to why it is done this way.
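As a rough sketch of what such a console message might carry, the following assumes a hypothetical `Diagnostic` shape. The rule name, file path and documentation URL are placeholders invented for the example.

```typescript
// Hypothetical structure for a single lint message.
interface Diagnostic {
  ruleId: string;
  file: string;
  line: number;
  reason: string;  // why the rule exists, in plain language
  docsUrl: string; // where the developer can read more
}

// Formats a diagnostic as one console line: location, rule, reason, then the link.
function formatDiagnostic(d: Diagnostic): string {
  return `${d.file}:${d.line} [${d.ruleId}] ${d.reason} (see ${d.docsUrl})`;
}

const message = formatDiagnostic({
  ruleId: "one-way-communication",
  file: "racing/RaceCard.ts",
  line: 42,
  reason: "TypeScript modules must not call into the .NET site.",
  docsUrl: "https://docs.example.invalid/rules/one-way-communication", // placeholder URL
});
```

Keeping the reason and the link in the same line as the error means the developer never has to go hunting for why a rule exists.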

We also began micro training sessions, bringing in only a couple of developers at a time, to give an intimate session where they could gain an understanding of where our vision was taking us.

Soft skills

As we began to introduce more standards around our ways of working in our new technology, as well as more mechanisms for catching issues, the final piece of the puzzle fell into place – Disruption.

Asking people not only to change technology but also to change how they write code, with an error preventing builds if they got it wrong, was causing disruption, either through misunderstanding of what was required or through simply not being aware of how things were planned to work.

We had to ensure that the team was fully on-board with the vision, and that they understood what we wanted to achieve. We had to ensure that the understanding was there that these rules were not here to slow us down, or make the job harder, but to ensure scalability of our code as we migrate more between technologies.

This meant a lot of 1-2-1 time with developers who were frustrated, showing them how the process looks when the rules are not followed, or indeed when they are optional.

How far we’ve come

We’ve come a long way, and it has been a difficult journey. What was envisioned as a simple switch of technology has evolved into a journey that required new custom tooling, changing ways of thinking, adopting new coding patterns and mentoring. We are a good number of years into this process, and we’re still not at the end.

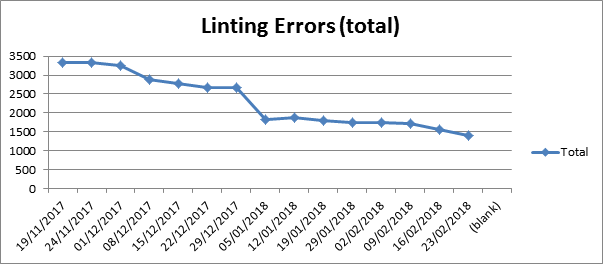

Throughout the process since introducing our more aggressive linting system we’ve kept a weekly record of how our code is conforming to our ways of working. Through a file-by-file system and having automatic fixers we’ve seen significant gains in a very short (relative to the code’s lifetime) period of time, whilst keeping the risk to our production systems minimal as the only areas touched are those that are being actively tested as part of the change.
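A weekly record of this kind boils down to simple arithmetic. The sketch below assumes a hypothetical `WeeklySnapshot` shape, and the figures in it are invented purely to show the calculation; they are not our real numbers.

```typescript
// Hypothetical record of one weekly measurement.
interface WeeklySnapshot {
  week: string;            // date the snapshot was taken
  totalFiles: number;      // files in the code base that week
  conformingFiles: number; // files passing the full rule-set
}

// Percentage of files that conform, rounded to one decimal place.
// Returns 0 for an empty code base to avoid dividing by zero.
function conformancePercent(snapshot: WeeklySnapshot): number {
  if (snapshot.totalFiles === 0) {
    return 0;
  }
  return Math.round((snapshot.conformingFiles / snapshot.totalFiles) * 1000) / 10;
}

// Illustrative figures only.
const earlier: WeeklySnapshot = { week: "week 1", totalFiles: 1000, conformingFiles: 250 };
const later: WeeklySnapshot = { week: "week 2", totalFiles: 1000, conformingFiles: 400 };

// Change in conformance between the two snapshots, in percentage points.
const improvement = conformancePercent(later) - conformancePercent(earlier);
```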

Is it all worth it though, or would sticking with the previous technology have suited? In our opinion the technology switch has been worth it, and we are now starting to reap the rewards.